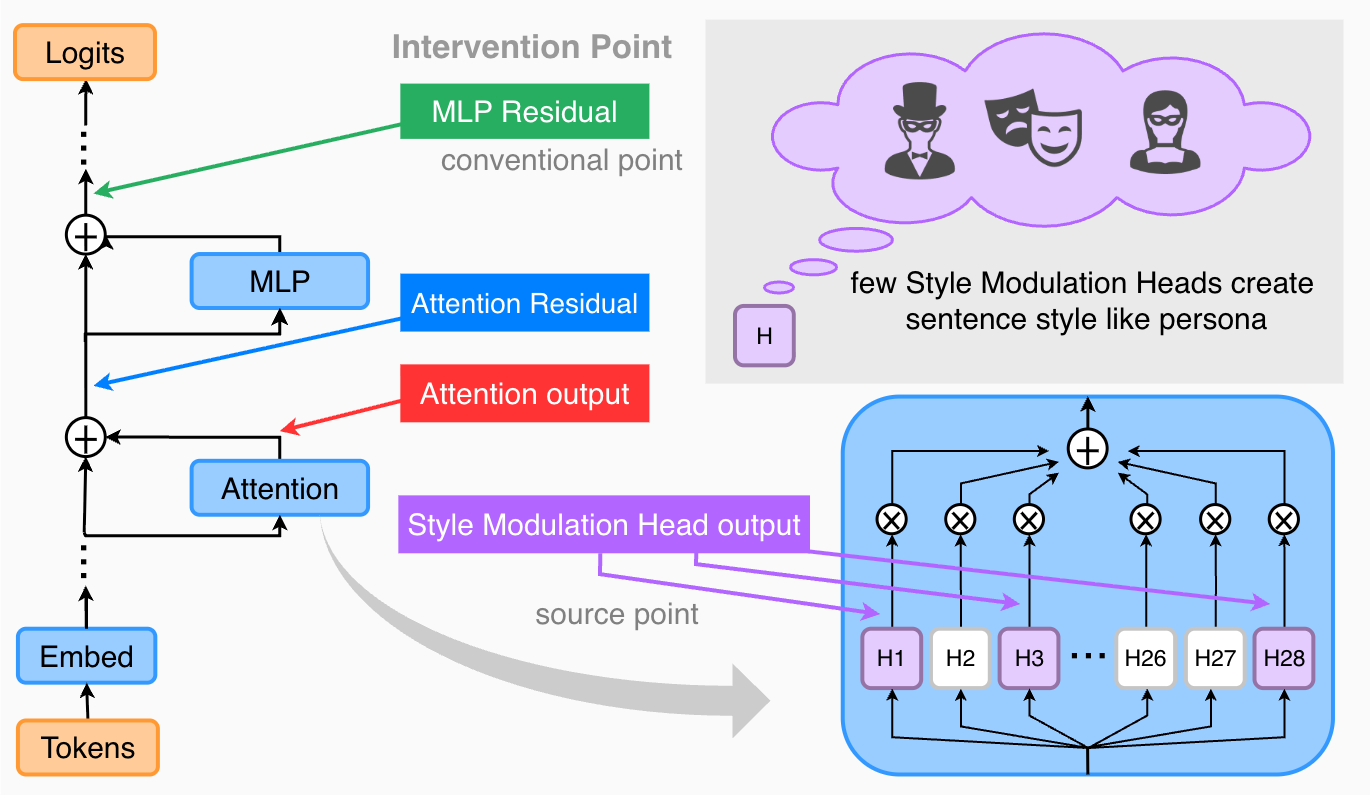

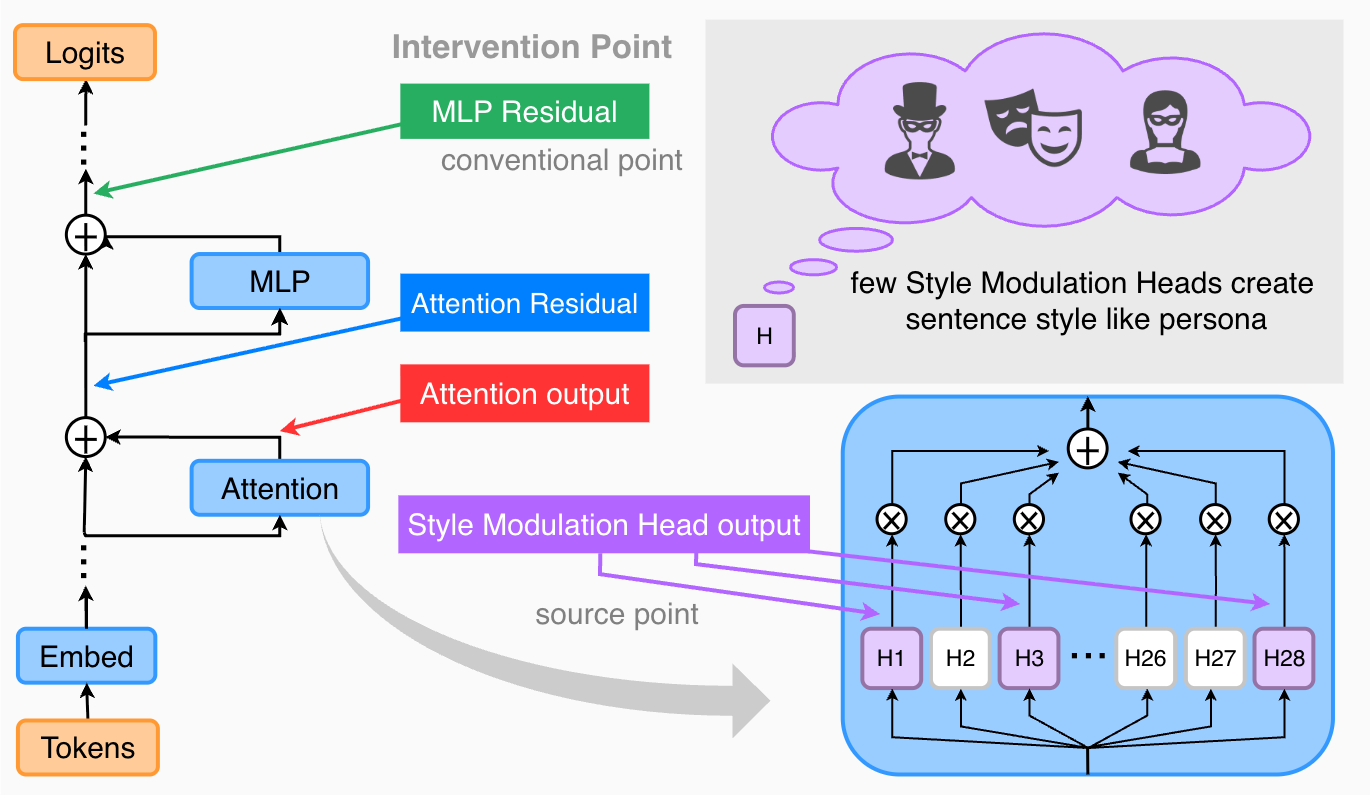

Steering at the Source: Style Modulation Heads for Robust Persona Control

Yoshihiro Izawa, Gouki Minegishi, Koshi Eguchi, Sosuke Hosokawa, Kenjiro Taura

First Author | ICLR 2026 Workshop

The University of Tokyo, Graduate School of IST, M1

Yamakata Laboratory

Mechanistic Interpretability

My primary research area is Mechanistic Interpretability: understanding the internal mechanisms of Large Language Models (LLMs). I work on making models more interpretable and controllable through techniques such as Activation Steering.

Coming Soon...